In part 1, I explained how to duplicate a single instance database using RMAN. This article will focus on converting the single instance to RAC.

2. Convert Single instance to RAC

We have migrated the database successfully from Non-ASM to ASM using RMAN; the database is still running as single instance. Now we need to convert the single instance database to RAC.

2.1 Create redo and undo for second instance

Each individual instance requires an independent set of redo and undo segments. Generally speaking, redo and undo are handled on a per instance basis. You must create two redo log groups for each instance and all redo groups must be stored on shared devices for an instance or crash recovery purpose. Each instance can have more than two redo log groups, but two are minimum per instance.

Each instance is assigned a thread number starting at 1, when you add more logfiles make sure you mention the THREAD, so that logfiles will be added to right instance.

Create second thread of online redo logs in order to start instance 2 and enable thread 2. It is always recommended to create redo log groups across disk groups for redundancy:

|

1 2 3 4 5 6 7 8 9 10 |

SYS@ORADB> alter database add logfile thread 2 group 3 ('+DATA’,'+FLASH’) size 50m reuse; Database altered. SYS@ORADB> alter database add logfile thread 2 group 4 ('+DATA’,'+FLASH’) size 50m reuse; Database altered. SYS@ORADB> alter database enable public thread 2; Database altered. |

Create undo tablespace for second instance using recommended automatic undo management feature. Each instance undo tablespace must be shared by all other instances for recovery:

|

1 |

SYS@ORADB> create undo tablespace UNDOTBS2 datafile '+DATA' size 500M; |

If you have more than two nodes then you need to repeat the steps on each node in the cluster.

2.2 Add cluster related parameters

The duplicated instance does not have any cluster related parameters, we need to add the cluster parameter in order to convert single instance to RAC. The CLUSTER_DATABASE=true parameter needs to set before a database can be started in cluster mode.

The CLUSTER_DATABASE_INSTANCES parameter specifies the number of instances configured as part of the cluster database. You should set this parameter value to the number of instances in your Real Application Cluster.

The INSTANCE_NUMBER is a unique number that maps instances to database.

Add below cluster related parameters to initORADB.ora:

|

1 2 3 4 5 6 7 8 9 10 11 |

*.cluster_database_instances=2 *.cluster_database=true *.remote_listener='LISTENERS_ORADB’ ORADB1.instance_number=1 ORADB2.instance_number=2 ORADB1.thread=1 ORADB2.thread=2 ORADB1.undo_tablespace='UNDOTBS1' ORADB2.undo_tablespace='UNDOTBS2' #update the actual controlfile path *.control_files='+DATA/ORADB/controlfile/current.256.666342941','+FLASH/ORADB/controlfile/current.256.662312941' |

Copy the updated init.ora file to node2 and rename the files as per instance name.

|

1 2 |

[oracle@orarac1]$ mv initORADB.ora initORADB1.ora [oracle@orarac2]$ mv initORADB.ora initORADB2.ora |

To make it easier to configure the tnsnames.ora and listener.ora manually for RAC environment, use Network Configuration Assistant (NETCA) to configure these files.

Drop the static listener created during the duplication process and launch Network Configuration Assistant (NETCA) on node1 and create the listener and tnsnames.ora entries. The netca starts the listener at the end of the configuration.

Prior 11g R2, it is recommended to run the listener from the ASM home and from11gR2 it is recommended to run the listener from the Grid Infrastructure home.

You can refer to the link below for configuring and administering Oracle net listener:

https://docs.oracle.com/database/121/NETAG/listenercfg.htm#NETAG010

|

1 2 |

Shutdown the database SYS@ORADB> > shutdown immediate |

2.3 Update the environment and start the database

Set the environment variable for each instance, recreate the password file and start the instances individually on two different nodes.

Environment variable setup on node1:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 |

[oracle@orarac1]$ export ORACLE_SID=ORADB1 [oracle@orarac1]$ export ORACLE_HOME=/home/oracle/product/v10204 [oracle@orarac1]$ orapwd file= $ORACLE_HOME/dbs/orapwORADB1 password=xxxxxxx [oracle@orarac1]$ sqlplus /nolog SQL*Plus: Release 10.2.0.4.0 - Production on Thu Jan 19 19:01:29 2012 Copyright (c) 1982, 2007, Oracle. All Rights Reserved. SYS@ORADB1> connect /as sysdba Connected to an idle instance. SQL>startup ORACLE instance started. Total System Global Area 541065216 bytes Fixed Size 2085288 bytes Variable Size 289410648 bytes Database Buffers 239075328 bytes Redo Buffers 10493952 bytes Database mounted. Database opened. SQL> select instance_name from v$instance; INSTANCE_NAME ---------------- <strong>ORADB1</strong> SQL> |

Environment variable setup on node2:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 |

[oracle@orarac2]$ export ORACLE_SID=ORADB2 [oracle@orarac2]$ export ORACLE_HOME=/home/oracle/product/v10204 [oracle@orarac2]$ orapwd file= $ORACLE_HOME/dbs/orapwORADB2 password=xxxxxxx oracle@orarac2]$ sqlplus /nolog SQL*Plus: Release 10.2.0.4.0 - Production on Thu Jan 19 19:02:29 2012 Copyright (c) 1982, 2007, Oracle. All Rights Reserved. SYS@ORADB1> connect /as sysdba Connected to an idle instance. SQL>startup ORACLE instance started. Total System Global Area 541065216 bytes Fixed Size 2085288 bytes Variable Size 289410648 bytes Database Buffers 239075328 bytes Redo Buffers 10493952 bytes Database mounted. Database opened. SQL> select instance_name from v$instance; INSTANCE_NAME ---------------- <strong>ORADB2</strong> SQL> |

Users may receive the below error, which is the most common error:

|

1 2 3 4 5 6 7 8 9 10 11 |

[oracle@orarac1]$sqlplus /nolog SQL*Plus: Release 10.2.0.4.0 - Production on Thu Jan 19 20:26:33 2012 Copyright (c) 1982, 2007, Oracle. All Rights Reserved. SQL> connect / as sysdba Connected to an idle instance. SQL> startup ORA-01078: failure in processing system parameters LRM-00109: could not open parameter file '/home/oracle/product/v10204/dbs/initORADB.ora' |

To avoid this error make sure to set the environment variable correctly.

2.4 Register the RAC instances with CRS

The Server Control Utility(SRVCTL) is a command line interface that you can use to manage RAC databases, ASM instances etc. from a single point. Using SRVCTL, you can stop and start the database and instances, delete or move instances and services. The SRVCTL stores configuration data in the Oracle Cluster Registry (OCR), you can also use this tool to add services and manage service configuration.

In order to manage Oracle instances using SRVCTL, you need to register the RAC instances with CRS. The CRS should be able to manage the RAC instances when you register. If the servers are rebooted due to planned or unplanned maintenance, the CRS automatically starts up the instances during the reboot.

Register the database instances with CRS framework using this command:

|

1 2 3 |

[oracle@orarac1]$ srvctl add database -d ORADB -o /home/oracle/product/v10204 [oracle@orarac1]$ srvctl add instance -d ORADB -i ORADB1 -n orarac1 [oracle@orarac1]$ srvctl add instance -d ORADB -i ORADB2 -n orarac2 |

Now the instances are registered with CRS, use SRVCTL to stop and start the database. Once the daetabase has been started with SRVCTL the conversion process is complete.

Stop the database using this command:

|

1 |

[oracle@orarac1]$ srvctl stop database -d ORADB |

Start the database using this command:

|

1 |

[oracle@orarac1]$ srvctl start database -d ORADB |

Use the following command to check the status of all instances converted RAC database:

|

1 2 3 4 5 6 7 |

SQL> select * from v$active_instances INST_NUMBER INST_NAME ----------- ----------------- 1 ORADB1 2 ORADB2 |

Check the status of all resources:

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 |

[oracle@orarac1]$ crs_stat -t Name Type Target State Host ------------------------------------------------------------ ora....B1.inst application ONLINE ONLINE orarac1 ora....B2.inst application ONLINE ONLINE orarac2 ora.ORADB.db application ONLINE ONLINE orarac2 ora....SM1.asm application ONLINE ONLINE orarac1 ora....r1.lsnr application ONLINE ONLINE orarac1 ora....ac1.gsd application ONLINE ONLINE orarac1 ora....ac1.ons application ONLINE ONLINE orarac1 ora....ac1.vip application ONLINE ONLINE orarac1 ora....SM2.asm application ONLINE ONLINE orarac2 ora....r2.lsnr application ONLINE ONLINE orarac2 ora....ac2.gsd application ONLINE ONLINE orarac2 ora....ac2.ons application ONLINE ONLINE orarac2 ora....ac2.vip application ONLINE ONLINE orarac2 |

The State must be “ONLINE” for all resources.

The crs_stat command deprecated in 11gR2, if your database is 11g R2 then use this command to check the status:

|

1 |

$ crsctl status resource –t |

In order to shutdown CRS you can run the following command on each node in the cluster. This command will stop Oracle HA Services and Clusterware Stack in a single command:

|

1 |

#crsctl stop crs |

From 11g R2 onwards, you can do this in two stops:

- Stop Clustwerware stack on local node:

1#crsctl stop cluster

- You can stop the clusterware stack on all nodes in the cluster:

1# Crsctl stop cluster –all

2.5 Create the spfile in ASM

SPFILE simplifies administration, and maintains consistent parameter settings. SPFILE is a binary file and lets you make persistent changes to individual parameters. Use the CREATE SPFILE statement to create a server parameter file from PFILE with SYSDBA/SYSOPER privilege. By default PFILE or SPFILE default location is “$ORACLE_HOME/dbs” for UNIX and LINUX, “%ORACLE_HOME%\database” for Windows. In the case of RAC, the best practice is creating SPFILE in ASM shared storeage:

|

1 |

SQL> create spfile=’+FLASH/spfileORADB.ora from pfile; |

Restart the database in order to take effect of spfile.:

|

1 2 |

[oracle@orarac1]$ srvctl stop instance -d ORADB [oracle@orarac1]$ srvctl start database -d ORADB |

2.7 Cluster Verify

The Cluster Verification Utility will be used to perform system checks in preparation for installation, system changes or patch updates. Run CLUVFY utility to check everything is fine after converting single instance to RAC:

Verify your Oracle Clusterware using “cluvfy” and fix issues, if any.

For ex: – The following command checks the complete oracle clusterware stack:

$ cluvfy comp crs- n all – verbose

Where -n all option is to verify all of the cluster nodes – verbose argument produces detailed output of individual checks.

Please refer Oracle documentation on Cluster Verify Utility for more information:

http://docs.oracle.com/cd/B28359_01/rac.111/b28255/cvu.htm

2.6 Check the logs

It is strongly advised to check all the logs related to Cluster, Database and instances when you perform installation, system changes or patch updates…etc. Make sure to check the log files to see the unexpected issues, if any.

CRS_HOME/log/hostname/crsd/ – The log files for the CRS daemon

CRS_HOME/log/hostname/cssd/ – The log files for the CSS daemon

CRS_HOME/log/hostname/evmd/ – The log files for the EVM daemon

CRS_HOME/log/hostname/client/ – The log files for the Oracle Cluster Registry (OCR)

CRS_HOME/log/hostname/racg/ – The log files for the Oracle RAC high availability component

CRS_HOME/log/hostname/racg – The log files for the Oracle RAC high availability component

CRS_HOME/log/hostanme/alert.log – The alert.log for Clusterware issues.

Please note that the CRS_HOME is the directory in which the Oracle Clusterware software was installed and hostname is the name of the node.

2.7 Setup Backup for RAC Database Using RMAN

Using RMAN for Oracle RAC database backup is a recommended and easy method as it does not require any separate installation. RMAN enables you to backup, restore and recover data files, control files, redo log files and spfile. RAC database backup is the same as backing up single instance but the best practice is to backup into a shared file system.

You can setup the backup from any instance in the Cluster and you are able to restore and recovery database from any instance in the cluster.

You can configure multiple channels and scale up the database backup load on multiple RAC instances:

|

1 2 |

CONFIGURE CHANNEL DEVICE TYPE [DISK|SBT] CONNECT '@RAC1' CONFIGURE CHANNEL DEVICE TYPE [DISK|SBT]CONNECT '@RAC2' |

You can configure channels to use automatic load balancing:

|

1 |

CONFIGURE DEVICE TYPE [DISK|SBT] PARALLELISM no_of_channels; |

Take advantage of parallelism for faster restore/recovery operations by setting RECOVERY_PARALLELISM

RMAN can be configured to automatically backup the control file and spfile whenever the database structure in the control file changes and whenever a backup record is added. The autobackup feature enables RMAN to recover the database even if the current control file, catalog, and server parameter file are lost:

Sample script to backup database:

|

1 2 3 4 5 6 7 8 9 10 |

RMAN> run { allocate channel d1 type disk; allocate channel d2 type disk; backup tag 'full_backup' database format 'shared_storage/ORACLE_SID_%d_t%t_s%s_p%p.rmn'; release channel d1; release channel d2; } exit; |

Oracles 11g introduced many exciting new features and information can be found here:

Oracle RMAN New Features in 11g

If you need to backup your database to tape then you need Media management library. Please refer to the following document for configuring RMAN to backup database to a media manager:

http://docs.oracle.com/cd/B28359_01/backup.111/b28270/rcmconfb.htm#i1006526

In many situations DBAs end up with an incomplete or failed recovery. The main reason for this issue is users don’t know what was backed up and what needs to be backed up for a successful recovery. Please refer to the following document to understand Oracle RMAN Reporting:

http://www.oracleracexpert.com/2011/06/understand-oracle-rman-reporting.html

2.8 Workload Management

Server pools (From 11g R2) – You can define a database to run in a server pool. A Server pool is a logical entity, an administrator can allocate resources to specific applications. Refer to the following document on server pools:

http://docs.oracle.com/cd/E11882_01/server.112/e24611/apqos_intro.htm#APQOS117

Service – Use service for workload management and you can hide the Oracle RAC complexity by providing a single system image to manage work load. A service can span one of more instances of a database and an instance can support multiple services. Refer to the following document to understand Oracle Net Service architecture:

https://docs.oracle.com/database/121/NETAG/intro.htm#NETAG001

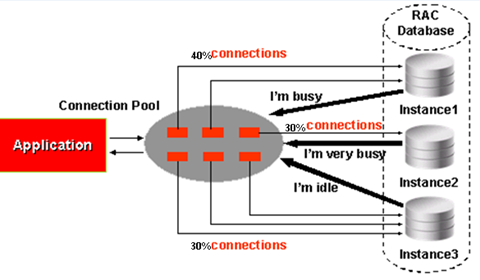

Load Balancing – Oracle Net service provides the connection load balancing and from 11g R2 onwards you can use SCAN (single client access name). Based on goal defined for the service, the listener chooses the database instance that will best meet the goal and the connection is routed to that instance through the local listener.

Refer to the following document to understand SCAN and Client service connections:

https://docs.oracle.com/cd/E11882_01/rac.112/e41959/admin.htm#CWADD838

Fast Application Notification (FAN) – FAN provides integration between RAC database and application. It allows the application to be aware of the current configuration of the server pools so that application connections are made to those RAC instances that are currently able to respond. Refer to the following document to understand FAN:

http://docs.oracle.com/cd/E11882_01/rac.112/e17264/configwlm.htm#TDPRC295

Alternative methods to convert single instance to RAC

Oracle provides the following methods to convert a single instance database to RAC:

- DBCA

- RCONFIG (from 10gR2)

- Enterprise Manager

- Manual (Using RMAN)

Refer to the following document to learn about these alternate methods: https://docs.oracle.com/database/122/RACAD/converting-single-instance-oracle-databases-to-oracle-rac-and-oracle-rac-one-node.htm#RACAD8851

Conclusion

Oracle Real application cluster is designed for Scalability and high availability and many customers implement RAC for their mission critical applications. Oracle RAC provides continuous service for both unplanned and planned outages and it allows the enterprise applications to grow in any direction by protecting from software and hardware failures, and ensures continuous data access.

Load comments